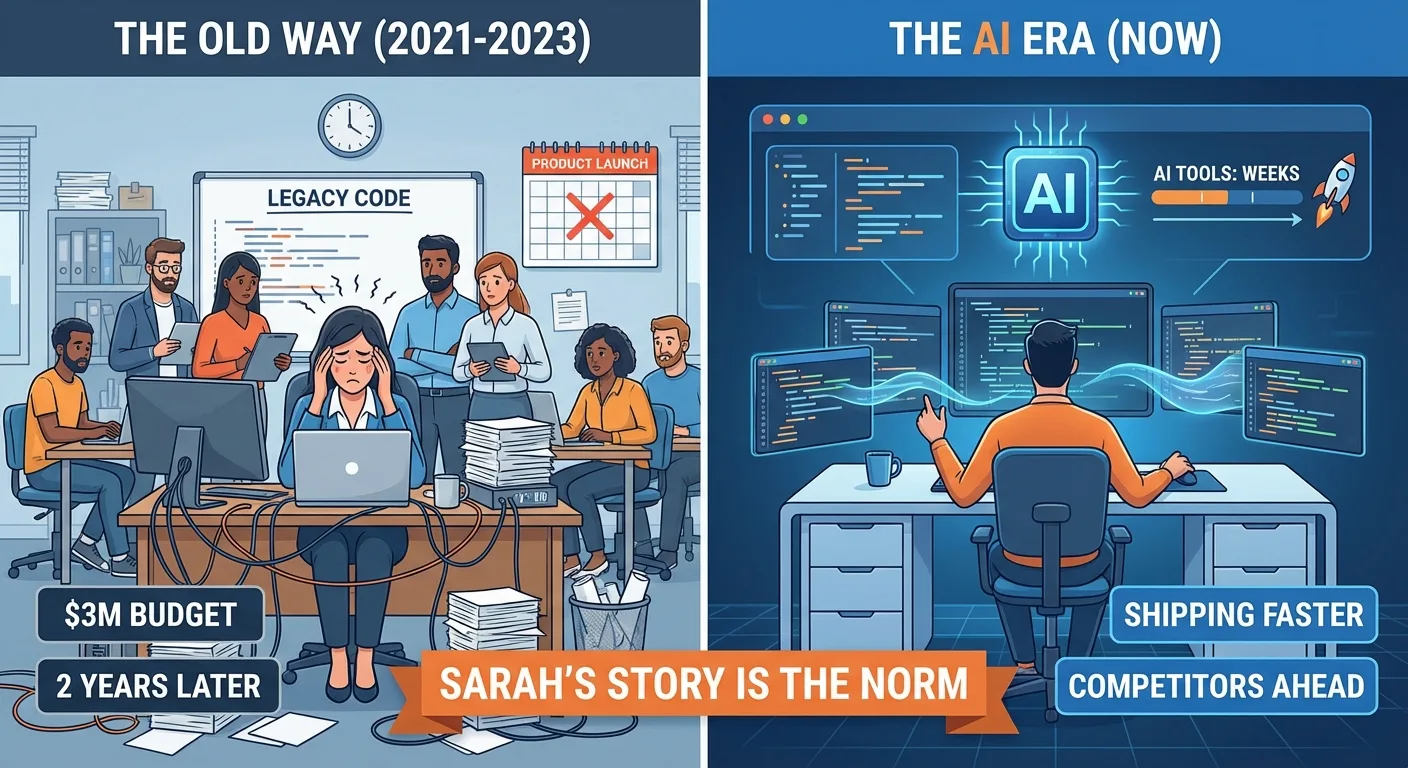

The $3 Million Mistake

Sarah hired her first developer in 2021. Then a second. Then a team lead. By 2023, she had twelve people and a $3 million annual engineering budget.

The problem? Her product still wasn't ready.

She'd spent two years building what AI tools can now generate in weeks. Her team was maintaining legacy code while competitors using AI were shipping faster, cheaper, and with fewer defects.

Sarah's story isn't unique. According to a 2023 Standish Group CHAOS report, only 31% of software projects are delivered on time and on budget. The rest bleed money through scope creep, miscommunication, and the sheer friction of large teams trying to coordinate. AI hasn't just introduced a new tool. It has exposed how much of traditional software development was always inefficient.

The Old Model Is Dead

For decades, building software meant hiring developers. Lots of them. Frontend, backend, DevOps, a tech lead to keep everyone aligned. Each one cost between $100K and $200K per year in total compensation, according to Levels.fyi salary data. In major metro areas, senior engineers regularly exceed $250K.

That model made sense when human cognition was the only path from idea to working code.

It doesn't anymore.

A 2024 McKinsey study found that generative AI tools can complete coding tasks 35% to 45% faster than traditional methods. GitHub's own research on Copilot showed developers completing tasks 55% faster, with the most significant gains in boilerplate and repetitive work. Tools like Cursor, v0, and Claude now generate production-ready code from plain English descriptions. What used to require a team of five can often be accomplished by one person who knows how to direct AI effectively.

The bottleneck has shifted. It's no longer about typing code. It's about knowing what to build and why.

What Actually Matters Now

Mike runs a SaaS company with $8 million in revenue. His "engineering team" is three people. One technical leader fluent in AI tools. Two product-minded operators who translate business needs into AI prompts and specifications.

They ship faster than competitors with teams of twenty.

What changed is fundamental. Mike stopped hiring people to write code. He hired people who could think strategically about product and wield AI as a force multiplier.

His technical leader rarely writes code by hand. She uses AI to generate it, reviews the output, and iterates. Her focus is architecture and product decisions, the cognitive work that AI cannot yet replicate. This shift toward AI agents handling the execution layer is redefining what engineering teams look like, and it tracks with research from Harvard Business School: a 2023 study found that AI improved performance on creative and strategic tasks by up to 40% when used as a collaborator, but degraded outcomes when users deferred to it entirely on complex judgment calls.

The product people on Mike's team don't hold computer science degrees. They understand customers. They translate needs into clear specifications. AI handles the conversion to code. This distinction matters more than most founders realize.

The New Playbook

The traditional approach looks like this. Hire five developers. Wait six months. Hope they build what you actually need. Spend another six months fixing what went wrong.

The AI-native approach looks different. Hire one technical leader who knows AI tools. Pair them with someone who deeply understands your customers. Prototype in days. Ship in weeks. Iterate based on real user feedback.

The math is stark. A traditional team might cost $750K per year in salaries alone, before you account for recruiting fees (typically 15% to 25% of first-year salary per hire), onboarding time, and management overhead. An AI-native team costs roughly $250K and, based on emerging productivity benchmarks, moves two to four times faster.

But speed and cost aren't even the most significant advantages.

The ROI Math

Let's stop being vague and put real numbers on this, because the gap between the two models is wider than most executives expect when they first see it laid out.

A typical five-person engineering team at a growth-stage company in 2024 looks like this: two mid-level developers at $130K each, one senior developer at $165K, one DevOps engineer at $140K, and one tech lead at $190K. Total base salary: $755K. Add 25% for benefits, payroll taxes, and equipment, and you're at roughly $944K per year before you spend a dollar on recruiting. A five-person hire at average recruiting fees of 20% of first-year salary costs another $151K upfront. Call it $1.1M in year one to staff a team that will take three to four months to reach full productivity.
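To make the arithmetic easy to check or adapt, here is a minimal Python sketch of the year-one calculation above. The salaries, the 25% load for benefits, payroll taxes, and equipment, and the 20% recruiting fee all come from this paragraph; the dictionary keys and the `year_one_cost` helper are illustrative names, not part of any tool.

```python
BENEFITS_LOAD = 0.25   # benefits, payroll taxes, and equipment
RECRUITING_FEE = 0.20  # one-time fee as a share of first-year salary

traditional_salaries = {
    "mid-level developer 1": 130_000,
    "mid-level developer 2": 130_000,
    "senior developer": 165_000,
    "DevOps engineer": 140_000,
    "tech lead": 190_000,
}


def year_one_cost(salaries, benefits_load=BENEFITS_LOAD, recruiting_fee=RECRUITING_FEE):
    """Return (base salary, loaded annual cost, recruiting fees, year-one total)."""
    base = sum(salaries.values())
    loaded = base * (1 + benefits_load)
    recruiting = base * recruiting_fee
    return base, loaded, recruiting, loaded + recruiting


base, loaded, recruiting, total = year_one_cost(traditional_salaries)
print(f"base ${base:,.0f}; loaded ${loaded:,.0f}; recruiting ${recruiting:,.0f}; year one ${total:,.0f}")
```

It prints a base of $755,000, a loaded annual cost of $943,750 (the "roughly $944K"), $151,000 in recruiting fees, and a year-one total of $1,094,750, which the paragraph rounds to $1.1M. Swap in your own salaries or load factor to rerun it.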

Now model the AI-native alternative. One senior AI Engineering Lead at $185K. One AI Product Specialist at $110K. One part-time QA Lead at $60K (contract). Total: $355K per year. Recruiting fees at the same 20% rate for the two full-time hires run about $59K. Year-one all-in: $414K. That's a $686K difference in year one alone.
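The same kind of sketch works for the AI-native side. One assumption is needed to reproduce the $59K recruiting figure: the 20% fee applies only to the two full-time salaries ($295K), not to the $60K QA contract. The paragraph also applies no benefits load to this team, while the traditional team carries 25%, so the sketch reports both versions as a sensitivity check. The helper name `ai_native_year_one` is hypothetical.

```python
BENEFITS_LOAD = 0.25   # same load applied to the traditional team
RECRUITING_FEE = 0.20  # same fee rate as the traditional team

# Full-time roles; the part-time QA lead is a $60K contract, assumed here
# to carry no recruiting fee and no benefits load.
ai_native_full_time = {"AI Engineering Lead": 185_000, "AI Product Specialist": 110_000}
QA_CONTRACT = 60_000

# Traditional team from the previous paragraph: $755K base, loaded by 25%,
# plus a 20% recruiting fee.
TRADITIONAL_BASE = 130_000 * 2 + 165_000 + 140_000 + 190_000
TRADITIONAL_YEAR_ONE = TRADITIONAL_BASE * (1 + BENEFITS_LOAD) + TRADITIONAL_BASE * RECRUITING_FEE


def ai_native_year_one(load_benefits: bool) -> float:
    """Year-one cost of the AI-native team, optionally loading the salaried roles."""
    salaried = sum(ai_native_full_time.values())  # $295,000
    multiplier = (1 + BENEFITS_LOAD) if load_benefits else 1.0
    recruiting = salaried * RECRUITING_FEE        # $59,000
    return salaried * multiplier + QA_CONTRACT + recruiting


for load in (False, True):
    cost = ai_native_year_one(load)
    gap = TRADITIONAL_YEAR_ONE - cost
    print(f"benefits loaded: {load}; year one ${cost:,.0f}; gap ${gap:,.0f}")
```

Without the load it returns $414,000, matching the text; the exact gap is $680,750, and subtracting from the rounded $1.1M gives about $686K. Loading the two salaried roles at 25% as well gives $487,750 and a gap of $607,000, which is still a large year-one difference.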

But the cost comparison only tells half the story. The full ROI picture goes beyond salary lines, and the velocity difference is where the real leverage lives.

The traditional five-person team will spend roughly 18% of their time in meetings and coordination, according to research from the Atlassian State of Teams report. Another 42% goes to maintenance and technical debt, per the Stripe research discussed below. That leaves maybe 40% of paid engineering time actually building new product. On a $944K salary base, you're getting about $378K worth of net-new feature development per year.
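The net-new figure follows from simple subtraction. This sketch assumes, as the paragraph implicitly does, that the 18% coordination share and the 42% maintenance share are separate slices of the same working time with no overlap; if they overlap, the new-work share is higher.

```python
LOADED_TEAM_COST = 943_750  # the "roughly $944K" loaded annual cost
MEETINGS_SHARE = 0.18       # coordination overhead (Atlassian figure)
MAINTENANCE_SHARE = 0.42    # maintenance and technical debt (Stripe figure)

new_work_share = 1 - MEETINGS_SHARE - MAINTENANCE_SHARE
new_work_value = LOADED_TEAM_COST * new_work_share

print(f"new-work share: {new_work_share:.0%}")         # new-work share: 40%
print(f"net-new development: ${new_work_value:,.0f}")  # net-new development: $377,500
```

The text rounds the result to $378K.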

The AI-native team runs a different equation. Coordination overhead drops sharply with fewer people. AI handles most of the repetitive implementation work. The human time goes almost entirely to architecture, product judgment, and reviewing AI output. In practice, three people using AI tools routinely match or exceed the feature output of five traditional developers, at roughly 45 cents on the dollar.
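Where the "45 cents" comes from is not spelled out. One reading that reproduces it, sketched below as an assumption rather than the author's stated method: compare annual run-rate costs and apply the same 25% benefits load to the two salaried AI-native roles (not the QA contract). Without that load the ratio is closer to 38 cents.

```python
BENEFITS_LOAD = 0.25

traditional_annual = 755_000 * (1 + BENEFITS_LOAD)  # $943,750, the loaded five-person team
ai_salaried = 185_000 + 110_000                      # AI Engineering Lead + AI Product Specialist
ai_contract = 60_000                                 # part-time QA lead (contract, no load assumed)

unloaded_ratio = (ai_salaried + ai_contract) / traditional_annual
loaded_ratio = (ai_salaried * (1 + BENEFITS_LOAD) + ai_contract) / traditional_annual

print(f"without benefits load: {unloaded_ratio:.2f} per traditional dollar")  # prints 0.38
print(f"with benefits load: {loaded_ratio:.2f} per traditional dollar")       # prints 0.45
```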

One more factor that rarely shows up in these calculations: time to first ship. The traditional team needs 90 to 120 days before they're functional as a unit. The AI-native team can have a working prototype in front of customers within two weeks of their first day. In fast-moving markets, that head start compounds in ways that are almost impossible to recover from if you're the competitor still waiting for your senior hire to pass the background check.

The Real Benefit That Gets Overlooked

Traditional teams accumulate friction. They build technical debt. A 2018 study by Stripe, The Developer Coefficient, estimated that developers spend 42% of their time dealing with technical debt and maintenance rather than building new features. Traditional teams create systems only they understand. They become institutional gatekeepers, often without intending to.

AI-native teams stay flexible. When AI writes most of the code, there's less ego attached to it. Bad code gets rewritten without drama. New approaches get tested without political resistance. Traditional teams, by contrast, run into what psychologists call "psychological ownership": when people invest significant personal effort into creating something, they resist changing it even when evidence suggests they should.

Jessica experienced this firsthand. Her traditional team spent three months building a feature that customers didn't want. She asked them to pivot. They resisted. Too much work already invested. Too much pride in the existing approach. The sunk cost fallacy, well-documented in behavioral economics research by Kahneman and Tversky, played out exactly as the theory predicts.

Her new AI-native team rebuilt the entire feature in four days based on customer feedback. No emotional attachment. No sunk cost trap. Just fast iteration toward what users actually needed.

What You Actually Need

Stop thinking about hiring developers. Start thinking about hiring strategic technologists.

You need someone who can understand your business model and customer needs at a deep level. Someone who asks the right questions before building anything. Who can direct AI tools to generate solutions, then review and iterate on the output with judgment and taste. Someone capable of making architectural decisions that AI isn't ready to handle. And who knows when to override AI's suggestions versus when to trust them.

That's a fundamentally different skill set from traditional development. It combines strategic thinking, product instinct, and technical judgment. The actual coding? That becomes the easiest part. A 2024 report from the World Economic Forum listed "AI and big data" as the fastest-growing skill category globally, but emphasized that the most valuable professionals would be those who combine AI fluency with domain expertise and critical thinking.

The AI-Native Hiring Framework

Most founders I talk to understand conceptually that the hiring model has changed, but freeze when I ask them to describe the org chart they're building toward. So here's a concrete framework for what an AI-native engineering function actually looks like, with real titles, responsibilities, and compensation ranges drawn from what I'm seeing in the market right now.

AI Engineering Lead (also called Technical Architect or AI Systems Lead): This is your most important hire. They set the technical direction, make architectural decisions, and serve as the human judgment layer on top of everything AI generates. They're fluent in multiple AI coding tools, understand when to trust AI output and when to rewrite it, and can translate business requirements into technical specifications that AI can execute reliably. Compensation in 2025: $155K to $210K base, depending on location and experience. You want someone who has shipped production systems using AI-assisted development, not someone who just read about it. Ask them to show you a codebase they built with AI. The quality of that code will tell you everything.

AI Product Specialist (also called Technical Product Manager or AI Product Operator): This person bridges customers and code. They gather requirements, write specifications clear enough for both humans and AI to act on, run user research, and handle the feedback loop between what customers want and what the engineering lead builds. They don't need to write code. They need to understand systems well enough to know what's feasible and communicate that clearly. Compensation: $90K to $135K. The best candidates often come from customer success, product management, or operations backgrounds with some technical exposure. A CS degree is not a disqualifier, but it's also not a requirement.

AI QA and Reliability Lead: Someone has to own the testing and quality layer. AI-generated code ships fast, which means bugs can also propagate fast if there's no systematic review process. This role builds and maintains the test suite, reviews AI output for security issues, catches the edge cases that slip through, and owns deployment processes. Compensation: $80K to $120K. Many good candidates here have traditional QA backgrounds and are actively learning AI tools. They're often undervalued by companies chasing the flashier roles, which means you can recruit them before the market catches up.

Domain Expert (fractional or part-time): Depending on your industry, you may need someone with deep domain knowledge who can validate that what AI is generating actually fits the regulatory, compliance, or professional standards of your space. Healthcare tech, fintech, and legal tech all need this. Budget $50K to $100K per year for a fractional arrangement. This is not a technical role. It's a knowledge boundary role, someone who knows when the AI is producing output that looks right but is subtly wrong in ways that matter.

What you'll notice is that this four-role structure runs $375K to $565K per year in compensation, and it replaces what used to require eight to ten people. The math works because AI handles the execution layer, freeing human time for the judgment layer where the real value is created. For interviews, I recommend giving candidates a live prompt engineering challenge. Give them a real business problem and watch how they break it down into a specification for AI. The quality of their prompt is more predictive of success than their coding portfolio.
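The $375K to $565K range is the sum of the low and high ends of the four compensation bands above, which a few lines of Python confirm (the dictionary is only a convenient way to hold the ranges):

```python
# Compensation bands for the four-role structure (USD per year).
roles = {
    "AI Engineering Lead": (155_000, 210_000),
    "AI Product Specialist": (90_000, 135_000),
    "AI QA and Reliability Lead": (80_000, 120_000),
    "Domain Expert (fractional)": (50_000, 100_000),
}

low = sum(lo for lo, _ in roles.values())
high = sum(hi for _, hi in roles.values())
print(f"${low:,} to ${high:,}")  # $375,000 to $565,000
```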

The Transition Period

David had a team of eight developers when he discovered AI tools. He didn't fire everyone. That would have been shortsighted and destructive.

Instead, he invested in retraining. He showed his team how to use AI to multiply their output. Half of them adapted and became remarkably productive. A quarter struggled but found adjacent roles where their skills still applied. A quarter left on their own terms.

Within six months, his team of four was outshipping the old team of eight. And they reported higher job satisfaction because they weren't grinding through tedious implementation work anymore. This mirrors findings from a 2023 Microsoft Research study, which found that developers using AI assistants reported higher satisfaction and less frustration, primarily because AI absorbed the monotonous tasks they least enjoyed.

The developers who thrived were the ones who already thought like product people. They saw AI as leverage, not as a threat to their identity.

What AI Can't Replace (Yet)

I want to be precise here, because the nuance matters. There are real categories of technical work where AI tools genuinely fall short today, and if your business operates in those spaces, the advice in this post needs to be weighted differently.

Novel algorithm development is the clearest example. If you're building a new machine learning architecture, designing a compression algorithm for a new data type, or working on problems that don't have well-established solutions in the existing literature, AI tools are not going to carry you. They'll generate plausible-looking approaches that are often subtly wrong or optimized for the wrong objective. A 2024 Google DeepMind analysis found that AI code generation tools perform well on tasks represented in training data but drop sharply in quality when the problem space is genuinely novel. For frontier research, you still need humans who can reason from first principles.

Low-level systems programming is another real gap. Writing kernel modules, device drivers, embedded firmware, and real-time operating system components requires a depth of hardware knowledge and precision that current AI tools don't reliably deliver. The failure modes in this space are severe: an AI hallucination in a kernel driver doesn't just crash a process; it crashes a machine. Companies building hardware products, embedded systems, or anything that touches the metal directly still need specialists who have spent years in this space.

Security-critical code deserves its own category. AI tools can write code that passes functional tests but introduces subtle vulnerabilities, particularly in cryptographic implementations, authentication flows, and authorization logic. A 2021 study by researchers at NYU found that roughly 40% of the programs GitHub Copilot generated for security-relevant scenarios were vulnerable. AI-generated code should never ship to production in security-critical paths without review from a human with adversarial thinking skills, someone who asks "how would I attack this?" not just "does this work?"

Performance optimization at the extreme end is also genuinely hard for AI. Getting an application from good performance to exceptional performance often requires profiling at the instruction level, understanding CPU cache behavior, and making tradeoffs that require deep knowledge of the specific hardware and workload profile. AI can suggest optimizations, but it often misses the interactions between components that matter most at the margin.

Finally, cross-system architectural decisions that require institutional context are beyond what AI can handle. Deciding how your data model should evolve over five years given your customer segment, regulatory exposure, and competitive positioning is not a coding problem. It's a strategic judgment call. AI can model options and surface tradeoffs, but the decision requires someone who understands your business at a level no AI tool currently has access to.

Here's the honest truth: most growth-stage software companies don't operate anywhere near these limits. Building a SaaS product, a marketplace, an e-commerce platform, or an internal tool? AI handles your needs reliably. If you're building the infrastructure that AI runs on, the answer is more complicated.

Case Study: The Agency That Switched

This story is one I watched play out over about eighteen months, and it's the most concrete example I can point to of what the transition actually looks like in practice.

Meridian Digital (not their real name) was a web development agency with fourteen full-time developers, two project managers, and an annual payroll of around $2.4 million. They built custom websites and web applications for mid-market clients, mostly in the $50K to $200K project range. Their margins were thin, roughly 19%, which is typical for the agency model when you're paying full-time salaries to staff through feast and famine cycles.

In early 2023, their founder James started experimenting with AI tools personally. He built a client prototype in a weekend using Cursor and Claude that would have taken his team three weeks. He didn't announce this to his staff. He spent three months quietly auditing which parts of his team's work AI could realistically handle and which parts still required human expertise.

His conclusion was uncomfortable: roughly 60% of the billable hours his team logged were for work that AI tools could now handle in a fraction of the time. Not perfectly. Not without oversight. But well enough that the math on human-only execution no longer held.

He made the decision to restructure rather than simply cut. Over twelve months, he didn't renew contracts for eight developers as projects wrapped up. He invested heavily in retraining the six who remained, putting each of them through a structured AI tools curriculum he built internally. He promoted two to "AI Project Leads" with significant salary increases. He hired one specialist focused entirely on AI workflow design and quality review.

The result at the eighteen-month mark: seven people total, a payroll of $1.1 million, and the ability to take on roughly three times the number of concurrent projects as before. Revenue held flat in year one while the transition was messy, then grew 34% in the following year as capacity expanded without headcount costs rising proportionally. Profit margins moved from 19% to 38%.

The part James talks about most is the client quality feedback. Because his team was spending far less time on execution and more time on strategy, architecture, and quality review, the final products improved. Clients were getting more thoughtful recommendations about what to build and why, not just faster delivery of whatever they asked for. Referral rates went up. Two enterprise clients expanded their relationships into ongoing retainers, which is the highest-margin work an agency can land.

James also made a point I think is underappreciated: the transition was harder on him emotionally than it was technically. He'd built relationships with developers over years. Letting people go, even through attrition, felt like a betrayal of commitments he'd made implicitly. He handled it by being transparent with his team early about the direction, giving people a real opportunity to adapt, and being generous with severance and references for those who moved on. The people who stayed knew why they were staying and what was expected of them.

If you're running an agency or a services business, Meridian's trajectory is probably the most directly applicable model you'll find right now. The economics are almost identical across every similar business I've talked to.

The Necessary Counterpoint

Some companies genuinely need traditional software teams. If you're building infrastructure for AI models, working on real-time distributed systems, or solving problems at the frontier of computer science, you need deep specialists. AI code generation tools still struggle with novel algorithmic challenges, low-level systems programming, and domains where training data is sparse.

A 2024 analysis by Google DeepMind found that while AI excels at generating common software patterns, its performance drops significantly on tasks requiring novel reasoning or deep domain-specific logic. The gap is closing, but it hasn't closed yet.

But most growth-stage companies aren't operating at that frontier. They're building apps. SaaS products. Internal tools. E-commerce platforms. The kind of software where AI code generation performs exceptionally well because the patterns are well-established and extensively represented in training data.

For those companies, the traditional hiring playbook represents measurable waste. You're paying for human hours to perform work that machines now handle faster and, in many cases, with fewer bugs in initial output.

What This Means Going Forward

If you're about to hire your first developer, pause. Consider hiring an AI-fluent technical leader instead. Someone who can prototype with AI and validate your ideas before you commit to building a full team. The cost of testing an idea has dropped by an order of magnitude. Your hiring strategy should reflect that.

If you already have a team, audit how much of their time goes toward work AI could handle. Research from Google's engineering productivity team suggests that up to 30% of developer time is spent on code that could be reliably generated by current AI tools. Then figure out how to shift your team's focus to higher-value work. Strategy over syntax. Architecture over implementation.

If you're a developer reading this and feeling defensive, that tension is worth sitting with. The question is no longer whether AI will replace coding tasks. GitHub's data already shows it happening at scale. It's whether you'll evolve your skills or keep insisting the old model still holds. History suggests that professionals who adapt to new tools early capture disproportionate value. Those who resist tend to get displaced not by the technology itself, but by peers who adopted it.

The companies winning right now? Not the ones with the largest engineering headcounts. They're the ones who figured out how to combine AI leverage with strategic thinking to build products that actually solve customer problems.

Sarah finally made the switch. She kept three of her twelve developers. The ones who could think strategically. She trained them on AI tools. Her burn rate dropped by 60%. Her shipping velocity doubled. Customer satisfaction scores went up because the team was iterating on feedback instead of fighting internal complexity.

Turns out she never needed a traditional software team at all. She needed people who could solve problems and knew how to use the best tools available. A fractional Chief AI Officer can help you make this same transition without the costly trial and error.

The best tools just happen to be AI now. And the organizations that internalize this shift early will define the next era of software development. The rest will spend years and millions learning what Sarah learned the hard way.

Frequently Asked Questions

What is a fractional Chief AI Officer?

A fractional Chief AI Officer (CAIO) is a senior AI strategist who works with your company on a part-time or project basis rather than as a full-time executive. They bring the same strategic AI leadership that large enterprises pay $300K+ for, but at a fraction of the cost. A fractional CAIO helps you identify where AI creates real business value, build or restructure your technical team for AI-native workflows, avoid expensive mistakes in tool selection and implementation, and develop an AI roadmap that aligns with your actual business goals rather than chasing hype.

How do I know whether my team's work is a good fit for AI tools?

Start by auditing what your team actually builds day to day. If more than 40% of their sprint work is CRUD operations, UI components, API integrations, or writing tests for existing logic, you're in the zone where AI tools deliver strong results. The clearest signal is if your team regularly describes their work as "repetitive" or complains about time spent on boilerplate. That's work AI tools were built for. If your team spends most of their time on novel algorithmic problems, hardware-adjacent code, or deep domain-specific logic with no established patterns, AI tools will help at the margins but won't transform your model as dramatically. Most growth-stage companies fall squarely in the first category, even if they don't realize it.
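If your tickets are already tagged by type, the 40% check can be run directly against a sprint's data. A minimal sketch, assuming each ticket carries a category label and a story-point estimate; the category names are hypothetical, so map them to whatever labels your tracker uses. Weighting by points rather than counting tickets keeps one large algorithmic task from looking as small as a quick CRUD change.

```python
# Categories this answer names as strong fits for AI tools (hypothetical labels).
ROUTINE_CATEGORIES = {"crud", "ui_component", "api_integration", "tests_for_existing_logic"}
THRESHOLD = 0.40  # "more than 40% of their sprint work"


def routine_share(tickets):
    """Fraction of story points in routine categories; tickets are (category, points) pairs."""
    total = sum(points for _, points in tickets)
    if total == 0:
        return 0.0
    routine = sum(points for category, points in tickets if category in ROUTINE_CATEGORIES)
    return routine / total


# A made-up sprint for illustration.
sprint = [
    ("crud", 3),
    ("ui_component", 2),
    ("api_integration", 5),
    ("novel_algorithm", 8),
    ("tests_for_existing_logic", 2),
    ("bugfix", 3),
]

share = routine_share(sprint)
print(f"{share:.0%} of points are routine; strong AI fit: {share > THRESHOLD}")
```

For this made-up sprint, 12 of 23 points are routine, so it prints 52% and True.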

How much can we actually save by switching to an AI-native team?

Based on real-world comparisons, most growth-stage companies see a 50% to 65% reduction in engineering costs in the first year. A traditional five-person team costs roughly $1.1M in its first year when you factor in salaries, benefits, recruiting, and overhead. An equivalent AI-native team of two to three people runs $350K to $415K and typically matches or exceeds the feature output. The savings compound over time because AI-native teams accumulate less technical debt, require less coordination overhead, and scale output by adopting better tools rather than adding headcount.

How should I interview candidates for AI-native roles?

The single most predictive interview exercise is a live demonstration. Give candidates a real business problem from your company and thirty minutes. Ask them to use AI tools to produce a working prototype or technical specification. You're not evaluating the final output on technical elegance. You're watching how they break down a problem, how they construct prompts, how they evaluate and correct AI output, and whether they can articulate what the AI got wrong and why. Candidates who treat AI as an autocomplete tool will underperform. The ones who treat it like a very fast junior developer they need to supervise and direct are the ones you want. Also check their GitHub or portfolio specifically for projects built primarily with AI assistance.

How do we measure whether the transition is working?

Track four metrics before and after the transition. First, cost per shipped feature: divide total engineering spend by the number of features or user stories delivered per quarter. Second, time to first ship: measure how long it takes from project kickoff to a working product in front of real users. Third, defect rate: track bugs per release or per thousand lines of code. Fourth, team utilization on new work: what percentage of engineering time goes toward building new capabilities versus maintaining existing code. Most companies that make the switch see cost per feature drop 40% to 60%, time to first ship shrink from months to weeks, and new-work utilization jump from roughly 40% to over 70%.
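A minimal sketch of the four metrics, assuming you can pull quarterly spend, delivered features, defect counts, lines shipped, and hours by type from your own systems. The `QuarterStats` class and all numbers below are hypothetical, chosen only to show the calculations; they are not results from any company.

```python
from dataclasses import dataclass


@dataclass
class QuarterStats:
    engineering_spend: float  # total engineering cost for the quarter (USD)
    features_shipped: int     # features or user stories delivered
    days_to_first_ship: int   # kickoff to a working product in front of users
    bugs: int                 # defects found in the quarter's releases
    kloc_shipped: float       # thousands of lines of code shipped
    new_work_hours: float     # hours spent building new capabilities
    total_hours: float        # all engineering hours

    def cost_per_feature(self) -> float:
        return self.engineering_spend / self.features_shipped

    def defects_per_kloc(self) -> float:
        return self.bugs / self.kloc_shipped

    def new_work_utilization(self) -> float:
        return self.new_work_hours / self.total_hours


def report(before: QuarterStats, after: QuarterStats) -> float:
    """Print the four metrics side by side; return the drop in cost per feature."""
    drop = 1 - after.cost_per_feature() / before.cost_per_feature()
    print(f"cost per feature: ${before.cost_per_feature():,.0f} -> "
          f"${after.cost_per_feature():,.0f} ({drop:.0%} lower)")
    print(f"time to first ship: {before.days_to_first_ship} -> {after.days_to_first_ship} days")
    print(f"defects per KLOC: {before.defects_per_kloc():.1f} -> {after.defects_per_kloc():.1f}")
    print(f"new-work utilization: {before.new_work_utilization():.0%} -> "
          f"{after.new_work_utilization():.0%}")
    return drop


# Hypothetical quarters before and after the transition.
before = QuarterStats(236_000, 10, 120, 30, 12.0, 1_600, 4_000)
after = QuarterStats(104_000, 10, 21, 18, 15.0, 1_500, 2_000)
drop = report(before, after)
```

If AI tooling changes how many lines your team ships, bugs per release may be the steadier of the two defect measures the answer mentions.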

Is AI-generated code secure enough to ship?

This is a real concern worth taking seriously. AI tools are good at generating functionally correct code but have documented weaknesses in security-sensitive contexts. A 2021 NYU study found that roughly 40% of the programs Copilot generated for security-relevant scenarios were vulnerable. That doesn't mean you can't use AI for security-adjacent code. It means you can't skip the review step. In practice, AI-native teams handle this by establishing clear rules: any code touching authentication, authorization, cryptography, data validation at trust boundaries, or financial transactions gets reviewed by a human with a security background before it ships. AI handles the first draft, humans handle the adversarial review. That process is still faster than traditional development because the first draft is already 80% of the way there.
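The review rule above can be enforced mechanically in CI. A minimal sketch, assuming the pipeline can list the file paths a change touches; the path patterns are hypothetical and would need to match your repository layout. Path matching is a coarse proxy for "touching" these areas, so it complements human judgment rather than replacing it; many Git hosts also offer a code-owners file that can route reviews the same way.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns for the areas the rule lists; adapt to your repo.
SECURITY_SENSITIVE_PATTERNS = {
    "authentication": ["*auth/*", "*login*", "*session*"],
    "authorization": ["*permissions*", "*rbac*", "*policy*"],
    "cryptography": ["*crypto*", "*keys/*"],
    "trust-boundary validation": ["*api/handlers/*", "*validators*"],
    "financial transactions": ["*billing*", "*payments*"],
}


def security_review_reasons(changed_paths):
    """Map each changed path to the sensitive areas it matches; unmatched paths are omitted."""
    reasons = {}
    for path in changed_paths:
        areas = sorted(
            area
            for area, patterns in SECURITY_SENSITIVE_PATTERNS.items()
            if any(fnmatchcase(path, pattern) for pattern in patterns)
        )
        if areas:
            reasons[path] = areas
    return reasons


changed = ["src/auth/token.py", "src/ui/button.tsx", "src/billing/invoice.py", "docs/README.md"]
flagged = security_review_reasons(changed)
for path, areas in flagged.items():
    print(f"{path}: needs security review ({', '.join(areas)})")
if not flagged:
    print("no security-sensitive paths touched")
```

A CI job could fail, or request a designated reviewer, whenever `flagged` is non-empty.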

Is it too late to make the switch?

No, and waiting longer only makes the gap wider. Companies that started the transition in 2023 and 2024 already have a significant competitive advantage, but the tools are improving so fast that teams starting today can close that gap quickly. The key is starting with your existing team rather than replacing them. Identify your most adaptable developers, invest in structured AI tool training, and give them real projects to practice on. Most teams reach meaningful productivity gains within 8 to 12 weeks of serious adoption. The developers who struggle most are those who view AI as a threat to their identity rather than a tool that makes their judgment more valuable.

Will moving to an AI-native model hurt my existing team's morale?

It depends almost entirely on how you handle the communication and the process. Teams that feel blindsided or replaced tend to disengage fast, and disengaged developers can do a lot of damage to a codebase and a culture in a short period of time. Teams that are brought in early, given real opportunities to learn new tools, and shown a path to higher-value work tend to adapt better than most leaders expect. Microsoft Research data showed higher satisfaction among developers using AI tools, primarily because AI absorbs the work they liked least. Frame the transition as an upgrade to the team's capabilities, not a reduction of headcount, and back that framing up with actual investment in training.

What kinds of projects are best suited to AI-native development?

AI-native development works exceptionally well for SaaS products, web applications, e-commerce platforms, internal tools, API integrations, mobile apps, and data dashboards. These projects rely on well-established patterns that AI tools handle reliably. The approach is less suited for novel algorithm research, low-level systems programming (kernel drivers, embedded firmware), real-time distributed systems, and security-critical cryptographic implementations. Most growth-stage companies build products that fall squarely in the first category, even if the founders believe their technical challenges are more complex than they actually are.

How long does it take to build an AI-native team?

From first hire to a functioning, productive AI-native team: six to twelve weeks if you're starting small and deliberate. The AI Engineering Lead is your first and most important hire. Once they're in place, they can help you identify the next role and structure the workflow. The mistake most founders make is trying to hire all roles simultaneously before the first person is oriented. Your first AI-native hire needs time to figure out what the workflow looks like for your context before you build around it. Resist the urge to staff up fast. The leverage in this model comes from the tools, not from headcount, and adding people before the workflow is solid just creates the coordination overhead you were trying to escape.

Which AI coding tools should my team use?

The most effective AI coding tools in 2025 include Cursor (an AI-first code editor), GitHub Copilot (inline code suggestions), Claude and ChatGPT (for architectural planning and code generation), and v0 (for rapid UI prototyping). The specific tools matter less than how your team uses them. The biggest productivity gains come from developers who treat AI as a fast junior engineer that needs supervision and direction, not as an autocomplete feature. Start with one tool, build proficiency, then expand. Most AI-native teams use two to three tools in combination depending on the task.

Should we tell clients that we use AI to build their products?

Yes, and sooner than feels comfortable. The companies I've seen try to obscure this end up in awkward conversations later when clients figure it out on their own. The framing that works is straightforward: you use AI tools the same way companies use compilers, frameworks, and cloud infrastructure. AI accelerates execution. Your team provides the judgment, architecture, quality review, and strategic direction. Most clients care about outcomes, not implementation details. They want their product built reliably, on time, and within budget. Lead with outcomes. When clients ask about the tools specifically, be honest and explain the oversight process.

How is an AI-fluent technical leader different from a traditional developer using Copilot?

It's the difference between someone who learned to drive in an automatic and someone who actually understands how an engine works. A traditional developer using Copilot is still thinking in terms of writing code and using AI as a faster autocomplete. An AI-fluent technical leader thinks in terms of systems and outcomes. They start with the business problem, break it into specifications, and use AI to generate entire working components. They evaluate AI output for architectural fitness, not just whether it compiles. The traditional developer with Copilot might be 30% faster. The AI-native leader might replace the need for three or four traditional developers entirely. Different category of impact.