The AI Automation ROI Nobody Talks About

Everyone's Measuring the Wrong Thing

Most conversations about AI automation start and end with the same question: "How much money will we save?"

Fair enough. Cost savings are easy to measure. You had five people doing a task, now you have two. The math is simple. But if cost savings are the only thing you're measuring, you're missing the bigger picture by a wide margin.

The real returns from AI automation show up in three places that rarely make it into the pitch deck: speed, accuracy, and decisions you could not make before.

I've been deploying AI systems across mid-market companies for over two years now, and the pattern is consistent. The CFO asks about headcount reduction. The CEO asks about competitive advantage. The CEO is asking the right question.

Speed Is Not Just "Faster." It Changes What Is Possible.

When a process that took two weeks now takes fifteen minutes, you save time, yes. But more importantly, you change the nature of the work itself.

A 2025 report from McKinsey found that companies using AI to accelerate workflows saw revenue gains of 10-15% on top of any cost reductions. The speed advantage didn't just make existing work cheaper. It opened up entirely new ways to operate. (McKinsey, "Superagency in the Workplace," January 2025)

Think about what happens when you can analyze customer feedback in real time instead of quarterly. You catch problems in days instead of months. You spot trends while they're still useful. That's not a cost story. That's a competitive advantage story.

According to Deloitte's 2025 State of AI in the Enterprise report, 74% of organizations that reported high AI ROI pointed to speed-to-insight as a primary driver, not headcount reduction. (Deloitte, "State of AI in the Enterprise," 2025)

Where speed creates the most value

I've seen the speed advantage play out most dramatically in three areas:

Customer support triage. A mid-market e-commerce company I worked with was taking 4-6 hours to route support tickets to the right team. AI agents now classify, prioritize, and route tickets in under 30 seconds. Resolution time dropped by 62%. That's not a cost saving. That's customers getting answers before they have time to write a bad review.

Financial close processes. Month-end close at a 200-person company used to take 8-10 business days. AI-assisted reconciliation, anomaly detection, and report generation cut that to 3 days. The CFO didn't care about the labor savings. She cared that she had accurate financials a week earlier, which meant better decisions on cash management and vendor negotiations.

Competitive intelligence. Manually tracking competitor pricing, product launches, and market positioning used to be a quarterly research project. Now AI agents monitor hundreds of sources continuously and flag changes within hours. One client caught a competitor's pricing mistake and adjusted their own positioning within 48 hours, winning a deal worth $340,000.

Accuracy Compounds Quietly

Bad data leads to bad decisions. Everyone knows this. But most companies underestimate how much inaccuracy is baked into their daily operations.

Manual data entry has an average error rate of about 1-4%. That sounds small until you realize it compounds across every report, forecast, and decision built on that data. AI automation can cut errors to near-zero. In many cases it eliminates entire categories of mistakes.

A 2025 Harvard Business Review article noted that AI-assisted forecasting improved prediction accuracy by 30-50% in supply chain operations compared to traditional methods. The authors argued that accuracy improvements had a larger financial impact than labor savings in most cases, because better predictions meant less waste, fewer stockouts, and smarter capital allocation. (Harvard Business Review, "AI-Powered Forecasting," 2025)

The hidden cost of inaccuracy

Accuracy doesn't show up as a single line item. It shows up everywhere. Fewer returns. Better inventory management. More reliable financial projections. Less time fixing things that should not have been broken in the first place.

Here's a real example. A parts distribution company I worked with had a 3.2% error rate in their order fulfillment process. That translated to roughly 960 incorrect shipments per month out of 30,000 orders. Each incorrect shipment cost an average of $47 in return shipping, restocking, and customer service time. That's $45,120 per month, or $541,440 per year in errors alone.

After deploying AI-assisted order verification (a Tier 3 local model that cross-checks orders against inventory, customer history, and shipping parameters), the error rate dropped to 0.4%. Monthly error costs went from $45,120 to $5,640. The system cost $800 per month to run.

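On the figures above, that's $39,480 in avoided error costs each month against $800 in running costs, a return of roughly 49:1 on accuracy alone before any implementation cost. No headcount changed. Nobody got fired. The same people just stopped spending 20% of their day fixing preventable mistakes.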
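The monthly figures in this example can be reproduced with a short calculation. The sketch below is illustrative only: the constant and function names are mine, and it treats the $800 running cost as the only cost because no implementation cost is given for this deployment.

```python
# Figures taken from the parts-distribution example above.
ORDERS_PER_MONTH = 30_000
COST_PER_BAD_SHIPMENT = 47     # USD: return shipping, restocking, service time
SYSTEM_COST_PER_MONTH = 800    # USD, running cost only (assumption: no other costs)


def monthly_error_cost(error_rate: float) -> int:
    """Cost in USD of incorrect shipments in one month at the given error rate."""
    bad_shipments = round(ORDERS_PER_MONTH * error_rate)
    return bad_shipments * COST_PER_BAD_SHIPMENT


before = monthly_error_cost(0.032)   # error rate before AI-assisted verification
after = monthly_error_cost(0.004)    # error rate after deployment
gross_savings = before - after
net_savings = gross_savings - SYSTEM_COST_PER_MONTH

print(f"Error cost before: ${before:,}/month")
print(f"Error cost after:  ${after:,}/month")
print(f"Gross savings:     ${gross_savings:,}/month")
print(f"Net of run cost:   ${net_savings:,}/month")
```

Swapping in your own order volume, error rates, and cost per bad shipment gives a first estimate of what an accuracy improvement is worth before anyone touches headcount.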

The compounding effect

What makes accuracy improvements so powerful is that they compound across every downstream process. When your order data is 99.6% accurate instead of 96.8%, your inventory forecasts get better. When your inventory forecasts are better, your purchasing decisions improve. When your purchasing is smarter, your cash flow improves. When your cash flow is healthier, your growth options expand.

One fix at the top of the data chain cascades through the entire business. I've seen companies trace a single AI accuracy improvement through five layers of downstream impact. The original ROI calculation never captured most of it.

The Decisions You Could Not Make Before

This is the part that gets overlooked the most.

Before AI tools became accessible, certain types of analysis were only available to companies with dedicated data science teams. If you wanted to run a regression analysis on customer churn, segment your audience by behavioral patterns, or predict which deals in your pipeline were most likely to close, you needed specialized talent.

Now you don't.

Gartner predicted that by the end of 2025, 70% of new analytics workflows would be generated by non-specialists using AI-powered tools. (Gartner, "Top Strategic Technology Trends for 2025")

A marketing manager can now ask an AI tool to analyze 50,000 customer interactions and surface the patterns that matter. A small business owner can build a predictive model for seasonal demand without writing a line of code. A sales team can score leads based on dozens of behavioral signals instead of gut feeling.

You can now be your own data scientist without actually being a data scientist.

Real examples of new decision-making capability

Pricing optimization. A SaaS company with 2,000 customers had never done systematic pricing analysis. They knew their pricing was "probably wrong" but didn't have a data science team to figure out what "right" looked like. We deployed an AI agent that analyzed usage patterns, churn data, and competitive pricing. Within three weeks, it identified that 23% of customers were on plans that significantly undervalued their usage. A pricing adjustment on renewals generated $180,000 in additional annual revenue. That analysis would have required a dedicated pricing analyst before AI. Now it runs automatically every quarter.

Churn prediction. A B2B services firm had no way to predict which clients were at risk of leaving. They found out when the client sent a cancellation notice. We built an AI model that analyzed email sentiment, support ticket frequency, usage patterns, and payment timing. It now flags at-risk accounts 45-60 days before they would typically churn, giving the account management team time to intervene. First-year save rate: 34% of flagged accounts retained.

Talent gap analysis. An HR director at a 400-person company wanted to understand which skills were missing from their workforce relative to their strategic plan. Previously, that would have required an expensive consulting engagement. An AI agent analyzed job descriptions, performance reviews, project outcomes, and industry benchmarking data. It produced a skills gap report in two hours that identified three critical capability gaps the leadership team hadn't recognized.

In a 2025 MIT Sloan Management Review study, companies that used AI for novel decision-making (not just process automation) were 2.4x more likely to report above-average profitability than those focused on cost reduction alone. (MIT Sloan Management Review, "AI in Business Gets Real," 2025)

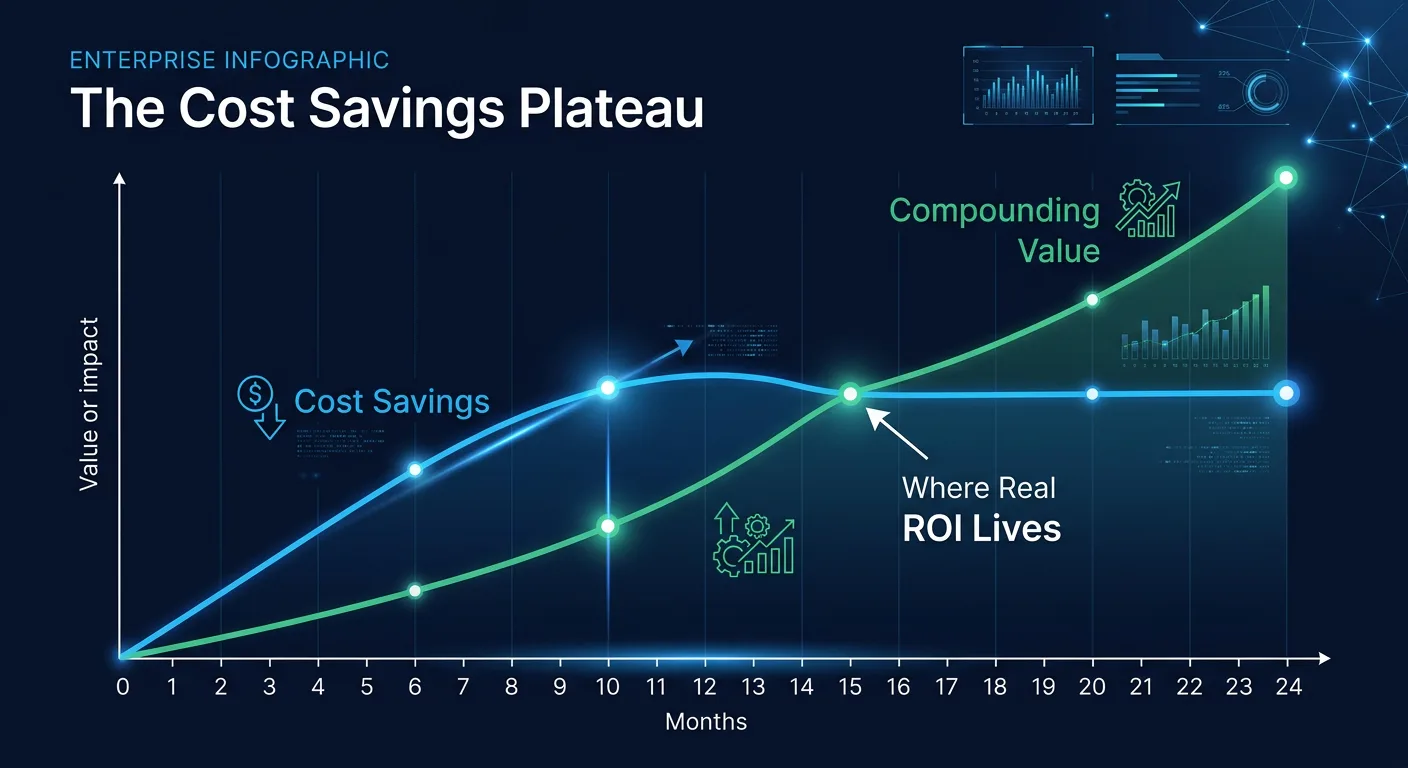

The Cost Savings Trap

None of this means cost savings don't matter. They do. But when cost reduction is your primary lens for evaluating AI, you end up making conservative choices. You automate the easy stuff. You replace the cheapest labor first. And you completely miss the high-value opportunities hiding in plain sight.

Forrester's 2025 AI Predictions report warned that organizations overly focused on cost-based ROI were 60% more likely to stall their AI initiatives within 18 months. The reason? Cost savings plateau quickly. Once you've automated the obvious tasks, the next round of savings gets harder and smaller. But speed, accuracy, and new decision-making capabilities keep compounding. (Forrester, "Predictions 2025")

The plateau problem

I see this pattern repeatedly. A company automates customer support ticket routing and saves $120,000 per year. They celebrate. Then leadership asks, "What's next?" The team looks for the next cost-saving automation, finds something worth $40,000, implements it, and then the next one is worth $15,000. The returns shrink with each iteration because the easy wins are gone.

Meanwhile, the speed and accuracy improvements from that first automation are quietly generating far more value. Faster ticket resolution is improving customer satisfaction scores, which is reducing churn, which is worth more than the original labor savings. But nobody is measuring that because the ROI framework only looks at costs.

How to avoid it

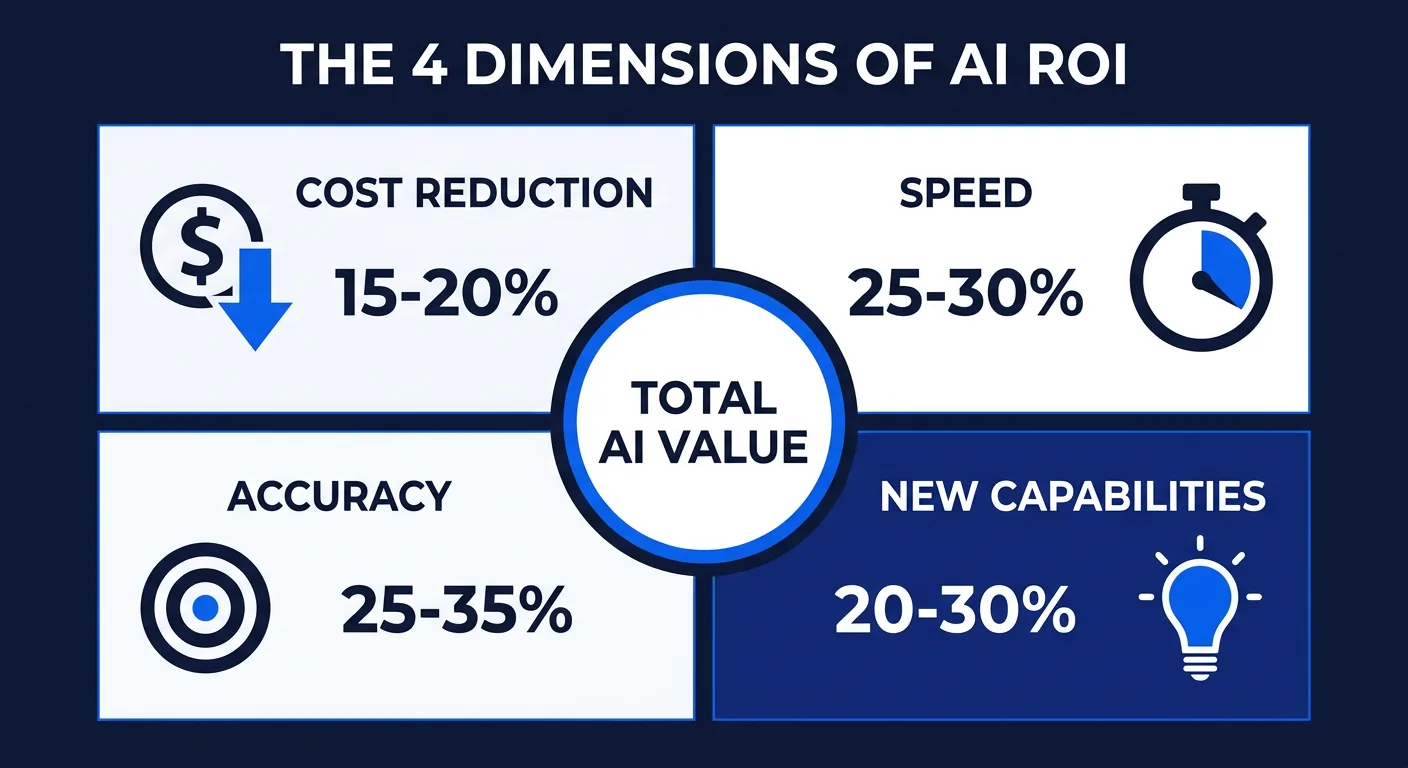

The fix is simple in concept but requires discipline in practice. When you evaluate an AI project, measure four things, not one:

| Metric | What it captures | Example |
|---|---|---|
| Direct cost reduction | Labor and operational savings | $120,000/year in support costs |
| Time-to-decision improvement | Speed of analysis and action | Month-end close reduced from 10 days to 3 |
| Error rate reduction | Accuracy and downstream quality | Order errors from 3.2% to 0.4% |
| New capability value | Decisions you could not make before | Churn prediction saving 34% of at-risk accounts |

Most companies only measure the first row. The other three rows typically represent 70-85% of the total value.

Why Mid-Market Companies Get the Best AI ROI

There's an irony in the AI ROI conversation that nobody discusses. The companies getting the best returns are not the Fortune 500 enterprises with massive AI budgets. They're mid-market companies with 200-2,000 employees and $50M-$500M in revenue.

Why? Three reasons.

Lower baseline efficiency. Large enterprises have spent decades optimizing their processes. There's less room for improvement. Mid-market companies typically have more manual processes, more tribal knowledge, and more inefficiency waiting to be captured. The gap between "current state" and "AI-optimized state" is wider, which means the ROI is bigger.

Faster decision-making. A mid-market CEO can approve an AI deployment in a week. An enterprise CTO needs six months of committee reviews, security audits, and procurement processes. Speed of adoption directly affects speed of returns. I've seen mid-market companies achieve in 90 days what enterprises take 18 months to implement. A structured 90-day Chief AI Officer (CAIO) roadmap makes this repeatable.

More tangible impact per project. When a $2 billion company saves $500,000, it barely registers. When an $80 million company saves $500,000, the CFO notices. The same AI deployment creates more strategic impact at mid-market scale because it represents a larger percentage of revenue and profit.

The challenge for mid-market companies isn't whether AI will deliver ROI. It's getting started without the internal expertise that large enterprises have. That's exactly why the fractional CAIO model works. You get the expertise without the $300,000+ salary, and you get results in 90 days instead of 18 months.

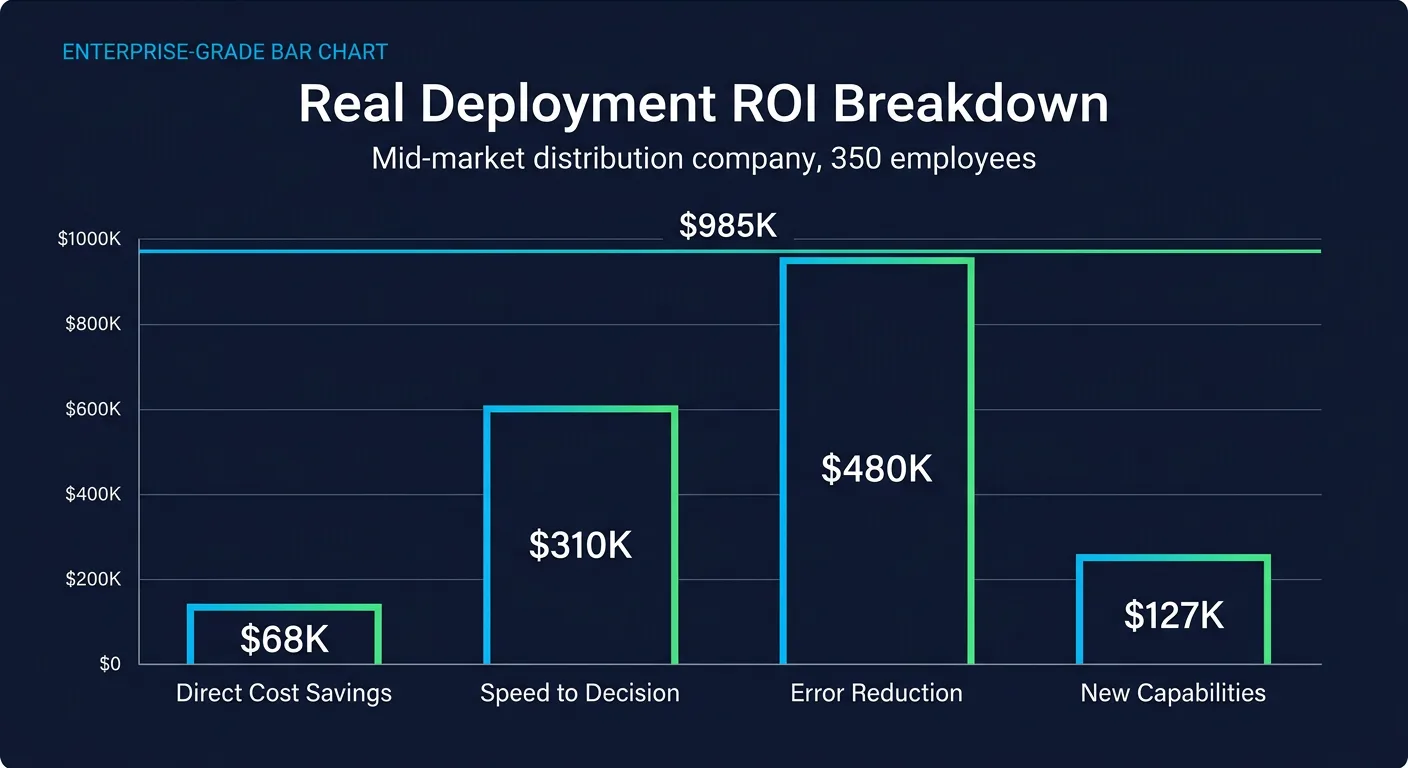

The Real Math: A Complete ROI Example

Let me walk through a real deployment to show how these four dimensions work together.

Company: Mid-market distribution company, 350 employees, $85M revenue.

AI deployment: Multi-agent system handling order processing, inventory forecasting, and customer communication.

Total implementation cost: $42,000 (infrastructure, configuration, 90 days of fractional CAIO time).

Monthly operating cost: $1,200 (inference costs plus monitoring).

Year 1 results by dimension:

Direct cost reduction: Two part-time data entry roles consolidated. Annual saving: $68,000.

Time-to-decision: Inventory reorder decisions that took 2 days now happen in 15 minutes. Resulted in 23% reduction in stockouts. Revenue impact from reduced stockouts: $310,000.

Error rate: Order accuracy improved from 96.8% to 99.6%. Annual savings from reduced returns, reshipping, and customer service: $480,000.

New capabilities: AI-powered demand forecasting identified three seasonal patterns the team had never recognized. Adjusted purchasing saved $127,000 in excess inventory costs.

Total Year 1 value: $985,000

Total Year 1 cost: $56,400

ROI: 17.5:1

If they had only measured the direct cost reduction ($68,000), the ROI would have looked like 1.2:1. Barely worth doing. The real value was 14x larger than the cost savings alone.
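The Year 1 arithmetic above can be written out as a small calculation, which makes it easy to rerun with your own numbers. This is a minimal sketch: the class and function names are hypothetical, and the figures are the ones from this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class YearOneValue:
    """Year 1 value in USD, split across the four dimensions."""
    direct_cost_reduction: float
    time_to_decision: float
    error_rate_reduction: float
    new_capabilities: float

    def total(self) -> float:
        return (self.direct_cost_reduction + self.time_to_decision
                + self.error_rate_reduction + self.new_capabilities)


def year_one_cost(implementation: float, monthly_operating: float) -> float:
    """One-off implementation cost plus twelve months of operating cost."""
    return implementation + 12 * monthly_operating


value = YearOneValue(
    direct_cost_reduction=68_000,
    time_to_decision=310_000,
    error_rate_reduction=480_000,
    new_capabilities=127_000,
)
cost = year_one_cost(implementation=42_000, monthly_operating=1_200)

full_roi = value.total() / cost                      # all four dimensions
cost_only_roi = value.direct_cost_reduction / cost   # what a cost-only view sees

print(f"Total value: ${value.total():,.0f}")
print(f"Total cost:  ${cost:,.0f}")
print(f"ROI, all four dimensions: {full_roi:.1f}:1")
print(f"ROI, cost savings only:   {cost_only_roi:.1f}:1")
```

Keeping the four dimensions as separate fields, rather than one lump sum, is the point: the gap between the two ratios only becomes visible when each dimension is tracked on its own.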

How to Build an AI ROI Framework That Actually Works

If you're a mid-market leader evaluating AI, here's the framework I use with every client. It takes about two weeks to set up and then runs continuously.

Step 1: Baseline everything before you touch anything

Before deploying any AI system, understand the AI stack landscape and measure the current state of every process you plan to automate. How long does it take? What's the error rate? How many people touch it? What decisions depend on its output? What's the downstream cost when it goes wrong?

Most companies skip this step because it's tedious. Then six months later, when the CEO asks "what did we get for our AI investment?", nobody can answer because there's no baseline to compare against. Spend the two weeks. Document everything. Future you will be grateful.
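One way to keep the baseline from getting lost is to record it in a fixed structure for every process, answering the questions above in the same order each time. The sketch below is an assumption about what such a record might look like: the field names are hypothetical, and apart from the 3.2% error rate and $47 cost per error borrowed from the earlier order-fulfillment example, the values are placeholders.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProcessBaseline:
    """Pre-deployment snapshot of one process (hypothetical schema)."""
    process: str
    cycle_time_hours: float                # how long does it take?
    error_rate: float                      # what's the error rate?
    people_involved: int                   # how many people touch it?
    dependent_decisions: tuple[str, ...]   # what decisions depend on its output?
    cost_per_error_usd: float              # downstream cost when it goes wrong
    measured_on: str                       # ISO date of the measurement


baseline = ProcessBaseline(
    process="order fulfillment",
    cycle_time_hours=6.0,                  # placeholder value
    error_rate=0.032,
    people_involved=5,                     # placeholder value
    dependent_decisions=("inventory forecast", "purchasing"),
    cost_per_error_usd=47.0,
    measured_on="2025-01-15",              # placeholder value
)

# Store the snapshot somewhere durable so it can be compared six months later.
record = json.dumps(asdict(baseline), indent=2)
print(record)
```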

Step 2: Identify the four value streams for each use case

For every potential AI deployment, map out where value will come from across all four dimensions:

Direct cost reduction. Will this reduce labor, licensing, or operational costs? By how much?

Speed improvement. What decisions or processes will get faster? What is that speed worth in terms of revenue, customer satisfaction, or competitive positioning?

Accuracy improvement. What error rates will decrease? What's the downstream cost of those errors today?

New capabilities. What analyses, predictions, or optimizations become possible that weren't possible before?

If a project only scores on one dimension, it might still be worth doing, but you shouldn't expect transformative results. The best AI deployments score on three or four dimensions simultaneously.
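A simple way to apply this is to estimate annual value per dimension for each candidate and rank the candidates by how many dimensions they touch. The sketch below shows one possible ranking rule, not a standard method: the dimension keys and function name are hypothetical, and the dollar estimates are placeholders loosely based on figures used elsewhere in this article.

```python
DIMENSIONS = ("direct_cost", "speed", "accuracy", "new_capability")


def dimensions_scored(estimates: dict[str, float]) -> list[str]:
    """Return the dimensions where a use case has a positive value estimate."""
    return [d for d in DIMENSIONS if estimates.get(d, 0) > 0]


# Estimated annual value in USD per dimension (placeholder figures).
use_cases = {
    "support ticket routing": {"direct_cost": 120_000, "speed": 90_000},
    "order verification": {"accuracy": 470_000},
    "demand forecasting": {
        "speed": 310_000,
        "accuracy": 50_000,
        "new_capability": 127_000,
    },
}

# Rank by number of dimensions first, then by total estimated value.
ranked = sorted(
    use_cases,
    key=lambda name: (
        len(dimensions_scored(use_cases[name])),
        sum(use_cases[name].values()),
    ),
    reverse=True,
)

for name in ranked:
    scored = dimensions_scored(use_cases[name])
    total = sum(use_cases[name].values())
    print(f"{name}: {len(scored)} dimension(s), ${total:,} estimated")
```

Ranking on breadth before size is a deliberate choice here: a single-dimension project can have the largest headline number and still be the least transformative.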

Step 3: Measure continuously, not quarterly

Traditional ROI reviews happen quarterly or annually. AI ROI should be tracked weekly, at minimum. The systems I deploy include real-time dashboards that track all four value dimensions. When a metric drifts in the wrong direction, you catch it in days, not months.

This continuous measurement also helps with the political challenge of AI adoption. When the skeptics in your organization question whether AI is working, you can show them a live dashboard instead of a quarterly report that's already six weeks old.
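Catching drift "in days, not months" needs an explicit rule for what counts as drift. The sketch below is one simple possibility, assuming weekly readings of a single metric: it compares the average of the last few weeks against a target with a tolerance band. The function name, the 25% tolerance, and the three-week window are all assumptions to tune, not recommendations.

```python
from statistics import mean


def is_drifting(
    weekly_values: list[float],
    target: float,
    tolerance: float = 0.25,
    window: int = 3,
    lower_is_better: bool = True,
) -> bool:
    """Flag a metric whose recent average is worse than target by more than tolerance."""
    recent = weekly_values[-window:]
    if not recent:
        return False  # no data yet, nothing to flag
    recent_avg = mean(recent)
    if lower_is_better:
        return recent_avg > target * (1 + tolerance)
    return recent_avg < target * (1 - tolerance)


# Weekly order error rates against the 0.4% post-deployment level.
creeping_up = [0.004, 0.004, 0.005, 0.006, 0.007]
holding = [0.004, 0.0041, 0.0039]

print(is_drifting(creeping_up, target=0.004))
print(is_drifting(holding, target=0.004))
```

Averaging over a short window instead of reacting to a single week keeps one noisy reading from triggering an alert, at the cost of a slightly slower signal.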

Step 4: Reinvest speed savings into capability building

This is where most companies stall. They achieve the initial ROI, declare victory, and stop. The companies that pull ahead take the time their teams saved through AI automation and redirect it toward building new capabilities.

Your customer support team saved 200 hours per month? Take 100 of those hours for proactive customer outreach, market research, or product feedback analysis. The compounding effect of reinvesting AI savings into growth activities is where the real long-term value lives.

I worked with a logistics company that tracked this systematically. Their operations team saved 160 hours per month after automating shipment tracking and exception handling. Instead of cutting headcount, the COO redirected 80 of those hours toward building direct relationships with their top 50 accounts. Within six months, account retention improved by 18% and upsell revenue increased by $220,000 annually. The automation paid for itself in cost savings alone, but the reinvestment multiplied the return by 4x.

That kind of compounding does not show up on day one. You have to plan for it from the beginning, which means deciding before you deploy where the freed-up capacity will go. Companies that leave this to chance end up with teams that fill the saved time with low-value work, and the ROI story stalls.

The Companies Getting It Right

The companies pulling ahead with AI aren't winning because they cut costs. They're winning because they move faster, make fewer mistakes, and see things their competitors can't see yet.

The most valuable thing AI automation gives you isn't a smaller budget. It's better decisions, made faster, by people who aren't data scientists but can now think like data scientists.

That's the ROI nobody talks about. And it's the one that matters most.

Common Mistakes in AI ROI Calculation

Before I close, here are the five most common mistakes I see mid-market companies make when calculating AI ROI:

Mistake 1: Only counting labor savings. This is the big one. Labor savings are real but they're usually the smallest component of total value. Speed, accuracy, and new capabilities together typically represent 70-85% of total ROI.

Mistake 2: Ignoring implementation costs. Some companies compare annual AI savings against monthly subscription costs and declare a 50:1 ROI. Be honest about total costs including implementation time, training, change management, and ongoing maintenance. An honest ROI calculation is more credible and more useful for future investment decisions.

Mistake 3: Measuring too early. Most AI deployments need 60-90 days to reach full operational effectiveness. Measuring ROI at 30 days is like judging a marathon runner after the first mile. Wait until the system is through the learning curve and operating at steady state.

Mistake 4: Not measuring at all. Surprisingly common. Companies deploy AI, feel like it's working, but never quantify the impact. When budget season comes and someone asks for hard numbers, there's nothing to show. Baseline measurement before deployment is not optional.

Mistake 5: Comparing against the wrong alternative. The question isn't "AI versus the status quo." The status quo isn't static. Your competitors are also adopting AI. The real comparison is "AI versus what happens if we do nothing while our competitors do something." That reframe changes the urgency of every ROI conversation.

If you want to understand what AI could actually do for your specific business (beyond the obvious cost cuts), that's exactly the kind of analysis a fractional Chief AI Officer runs in the first 30 days.

Frequently Asked Questions

What ROI can mid-market companies expect from AI automation?

In real deployments, mid-market companies typically see 10:1 to 20:1 ROI when measuring across all four value dimensions: direct cost reduction, speed improvement, accuracy gains, and new decision-making capabilities. Companies that only measure cost savings see 1:1 to 3:1 because they are missing 70-85% of the total value.

How long does it take to see ROI from an AI deployment?

Most AI deployments need 60-90 days to reach full operational effectiveness. Initial results (cost savings, speed improvements) typically appear within 30 days. Accuracy improvements and new capability value compound over 6-12 months. Measuring ROI before 60 days gives an incomplete picture.

Why do mid-market companies get better AI ROI than large enterprises?

Three reasons: lower baseline efficiency (more room for improvement), faster decision-making (deploy in weeks, not months of committee reviews), and more tangible impact per project (the same $500K saving represents a larger share of revenue at mid-market scale).

What is the most common mistake in AI ROI calculation?

Only counting labor savings. Direct cost reduction typically represents just 15-20% of total AI value. Speed-to-decision improvements, error rate reductions, and new analytical capabilities together account for 70-85% of the real returns, but most companies never measure them.

How much does an AI deployment cost for a mid-market company?

For mid-market companies, a typical deployment costs $5,000-15,000 in infrastructure and 60-90 days of fractional CAIO time. Monthly operating costs run $500-2,000 for inference. Most deployments pay for themselves within 60-90 days when all four ROI dimensions are measured.